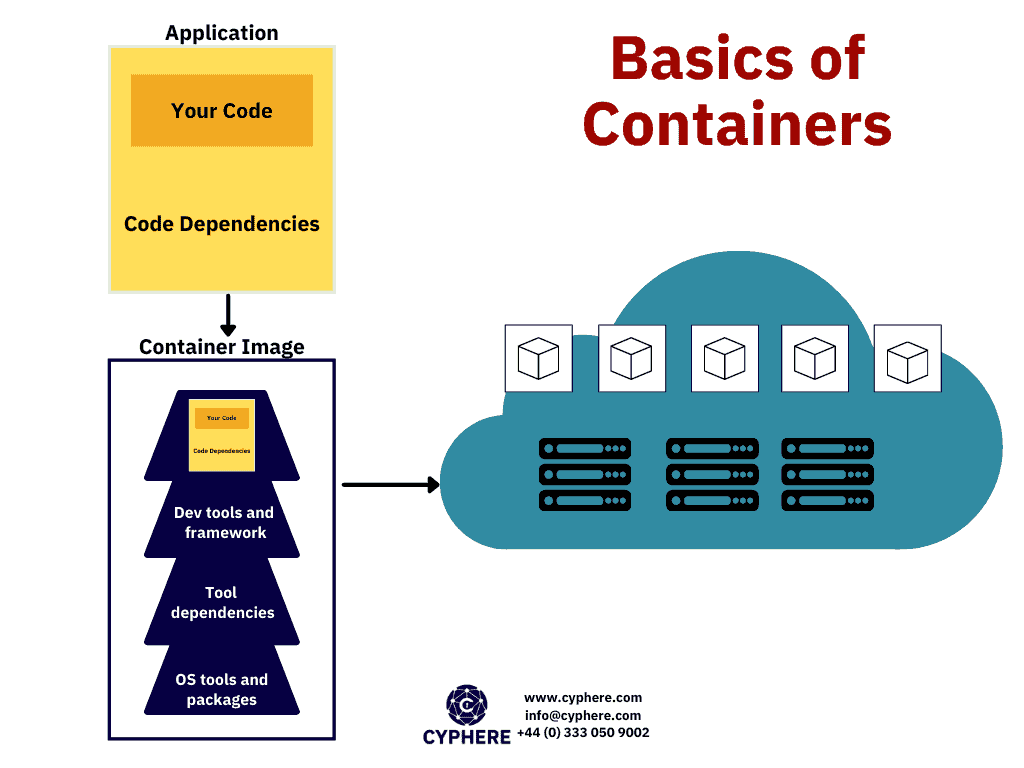

A container is packaged software that bundles an application's code and all of its dependencies in an isolated environment, so that the application can be moved from one computing environment to another. Docker container images are lightweight, standalone, executable software packages.

Today, the ability to run an application in multiple computing environments without having to modify its code, tools, dependencies or configuration settings is of paramount importance.

Container images become running containers at runtime, when they are executed by the Docker engine. Another benefit of Docker containers is that containerised software runs the same way regardless of the base operating system (for example, Windows or Linux).

This is because containers isolate the software from the base OS environment and ensure that it works across computing environments. Containers have changed software development and testing by allowing developers to continuously integrate and deploy code to staging and production releases.

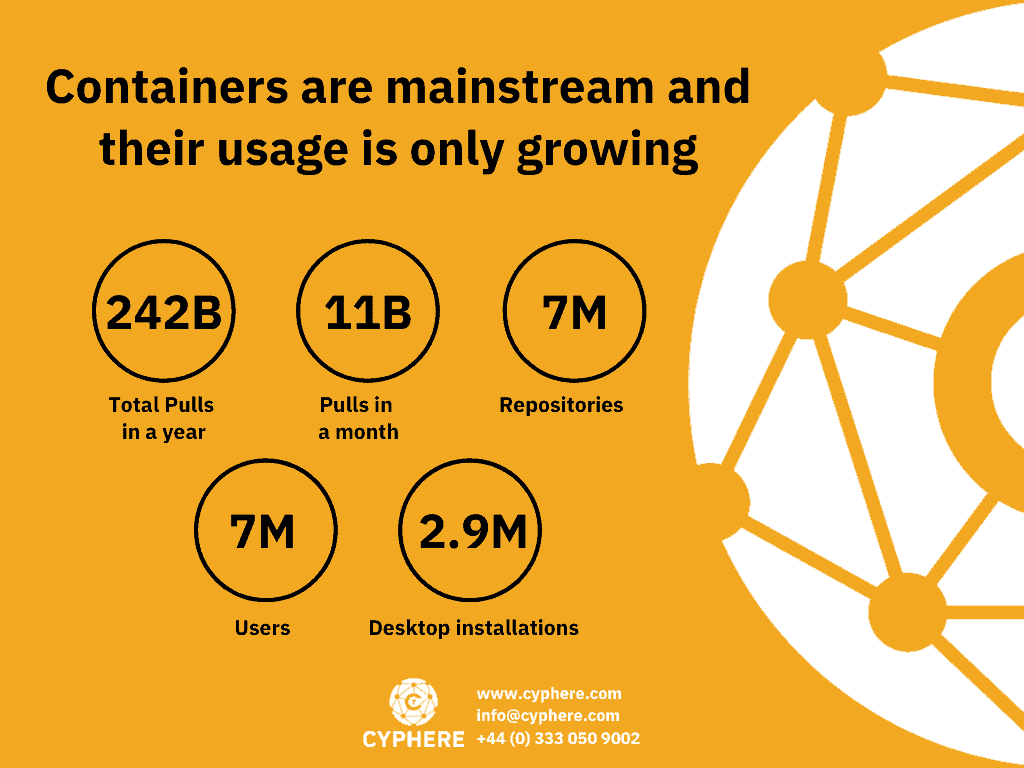

As explained above, containerisation has numerous benefits. As a result, many organisations around the globe have adopted containerisation in their development processes. In fact, Gartner predicted in 2020 that more than 50% of organisations around the world would have applications running in Docker containers.

However, this massive adoption of Docker containers also creates opportunities for malicious adversaries to compromise organisations by exploiting these containers. Docker containers can be a single point of compromise for an organisation. It is therefore essential that Docker container operators configure containers securely and integrate security at every stage, so that there are no loopholes for adversaries to exploit.

Container terminology to know

Before diving into the basics of container security, there are a few core terms that you should understand, as they are used frequently when talking about containers.

Containers

A container is a standard unit of packaged software. It contains the code and all the dependencies that the application needs to run, in a lightweight form. It is a standalone, executable package that includes everything the application needs to run properly as an isolated process on a shared kernel.

Containers can be understood as an abstraction of the application layer, just like virtual machines are an abstraction of the hardware layer.

Docker

Docker is a set of Platform-as-a-Service (PaaS) products that use OS-level virtualisation to deploy containers.

Kubernetes

Kubernetes is an open-source container orchestration system that provides users with management and automation functionalities to help in container operations and deployments.

Pod

A Pod is a group of one or more containers that run together on the same host and share storage, network resources and a specification for how to run them.

What is container security?

Container security is the practice of applying information security controls to containerised software. Many factors need to be taken into consideration, such as the robustness of the container image, the container that will execute the image, and the Docker configuration on the operating system.

Understanding the container issue

One major challenge in securing containers is that it differs considerably from traditional security. There are numerous areas within a container that an attacker can use to exploit the container and the underlying operating system. The attack surface ranges from the applications running within the container to the infrastructure it is running on.

Example scenario 01

Consider that a container image is used as a baseline for creating other images. If the security of the base image is not hardened, the derived images will suffer from the same security vulnerabilities or misconfigurations.

Example scenario 02

While containers behave like virtual machines, they differ from them in many ways. For example, the network communication of virtual machines is routed via perimeter routers or firewalls, whereas the traffic of a Docker container may exist only between the application and the container. We still need to monitor the network traffic of these containers to see whether any malicious traffic is being routed between the application and its container.

Why is container security important?

Container security is as important as traditional systems security, because the compromise of either can violate the CIA triad, i.e. Confidentiality, Integrity and Availability. If an e-commerce application is running within a container and the container is compromised, the sensitive data of customers will be put at risk, causing the organisation financial and reputational damage.

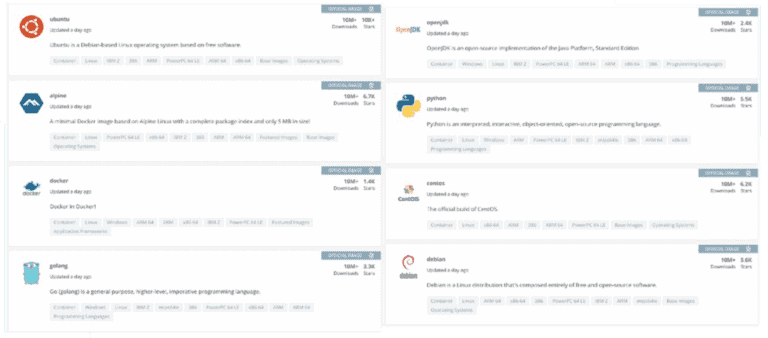

Docker made containers easy for the community to use by providing a centralised platform, Docker Hub, where the Docker community publishes its container images. The number of published container images has grown dramatically over the past years.

As a result, Docker users tend to use these published container images as a baseline rather than creating their own from scratch. The problem with this approach is that these container images become outdated over time and may contain numerous security misconfigurations and vulnerabilities. Moreover, the images could have been infected with backdoors by malicious actors.

How to create secure container images

As discussed earlier, securing a container requires attention to multiple areas. The responsible personnel include developers, security analysts and the operations team. A Defense-in-Depth approach must be taken when securing containers. These defensive layers include:

- Securing the container image itself and the software inside.

- Securing communication between the container and the host operating system, and between different containers.

- Securing the underlying operating system.

- Securing the storage configuration.

- Securing container runtime operations in Kubernetes clusters.

Securing the container image consists of roughly three steps.

1. Secure the code and its dependencies

One of the main advantages of using a Docker container is faster application delivery. However, speed should not come at the cost of security. The application code must be secure, and insecure functions and dependencies should not be used. Input sanitisation checks should be in place wherever data is inserted into a SQL query or used to execute system commands.
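As a minimal sketch of those two checks, the Python snippet below uses a parameterised SQL query and runs a child process without a shell. The `customers` table and the function names are hypothetical and only serve as illustration; a real application would apply the same pattern to its own schema and commands.

```python
import sqlite3
import subprocess
import sys


def find_customer(conn: sqlite3.Connection, email: str) -> list:
    """Look up a customer with a parameterised query.

    The "?" placeholder makes the driver treat `email` strictly as data,
    so input such as "' OR '1'='1" cannot change the structure of the query.
    """
    cursor = conn.execute(
        "SELECT id, email FROM customers WHERE email = ?", (email,)
    )
    return cursor.fetchall()


def echo_argument(value: str) -> str:
    """Pass user input to a child process as a single argument, without a shell.

    The command is given as a list and no shell is involved, so characters
    such as ";" or "&&" inside `value` are never interpreted as shell syntax.
    """
    result = subprocess.run(
        [sys.executable, "-c", "import sys; print(sys.argv[1])", value],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()
```

Building the query with string concatenation, or passing the command as one string with `shell=True`, would let the same input alter the query or run extra commands.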

This security control of container images belongs to the developers. While it’s easy for developers to understand their own code, identifying the pieces that may lead to security vulnerabilities is not. There are special tools for this purpose that can be integrated into a Continuous Integration and Continuous Delivery (CI/CD) pipeline.

These tools automatically analyse the application code and identify any code that may lead to a security vulnerability. This testing is called static application security testing (SAST). SonarQube is one of the most well-known tools for performing SAST. Another tool, for identifying vulnerable dependencies, is snyk.io.

With these automated tools, development, security analysis, integration and deployment can be both expedited and performed seamlessly via git commits and a Jenkins pipeline.

2. Use a basic and minimalist image from a trusted source

The “FROM” line in your Dockerfile can turn out to be your biggest nightmare. The container image should be built from a trusted base image source. The image should be checked for potential command-and-control (C2) network communication and for potentially malicious processes that may be serving as backdoors.

Luckily, there is a lot of content on Docker Hub from trustworthy vendors. Some of these images are also part of Docker's curated set of Official Images.

While there are trustworthy images on Docker Hub, you still need to be careful when picking an image for your particular use case. Just as we check a website's source and reputation before installing software from the internet, we should be equally vigilant when selecting Docker images.
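One way to make that vigilance routine is to check every FROM line against an allowlist of approved repositories. The sketch below is illustrative only: the contents of `TRUSTED_REPOSITORIES` are hypothetical, and the parser handles the common `FROM image[:tag|@digest] [AS name]` form rather than every Dockerfile feature (for example, it does not expand `ARG` variables).

```python
# Hypothetical allowlist; replace with the repositories your team has vetted.
TRUSTED_REPOSITORIES = {"python", "alpine", "registry.example.com/base/runtime"}


def base_images(dockerfile_text: str) -> list:
    """Return the image reference of every FROM line, skipping stage aliases."""
    images, stage_names = [], set()
    for raw_line in dockerfile_text.splitlines():
        parts = raw_line.strip().split()
        if len(parts) < 2 or parts[0].upper() != "FROM":
            continue
        # Ignore optional flags such as --platform=linux/amd64.
        args = [p for p in parts[1:] if not p.startswith("--")]
        if not args:
            continue
        image = args[0]
        if image not in stage_names:  # "FROM build" reuses an earlier stage
            images.append(image)
        if len(args) >= 3 and args[1].upper() == "AS":
            stage_names.add(args[2])
    return images


def repository_of(image: str) -> str:
    """Strip a digest (@sha256:...) or a tag (:3.12) from an image reference."""
    name = image.split("@", 1)[0]
    last_slash = name.rfind("/")
    colon = name.rfind(":")
    # A colon before the last slash belongs to a registry port, not a tag.
    if colon > last_slash:
        name = name[:colon]
    return name


def untrusted_base_images(dockerfile_text: str, trusted=TRUSTED_REPOSITORIES) -> list:
    """List the base images whose repository is not on the allowlist."""
    return [
        image
        for image in base_images(dockerfile_text)
        if repository_of(image) not in trusted
    ]
```

A check like this could run in the CI pipeline and fail the build when `untrusted_base_images` returns anything. It only verifies where an image comes from, not what is inside it.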

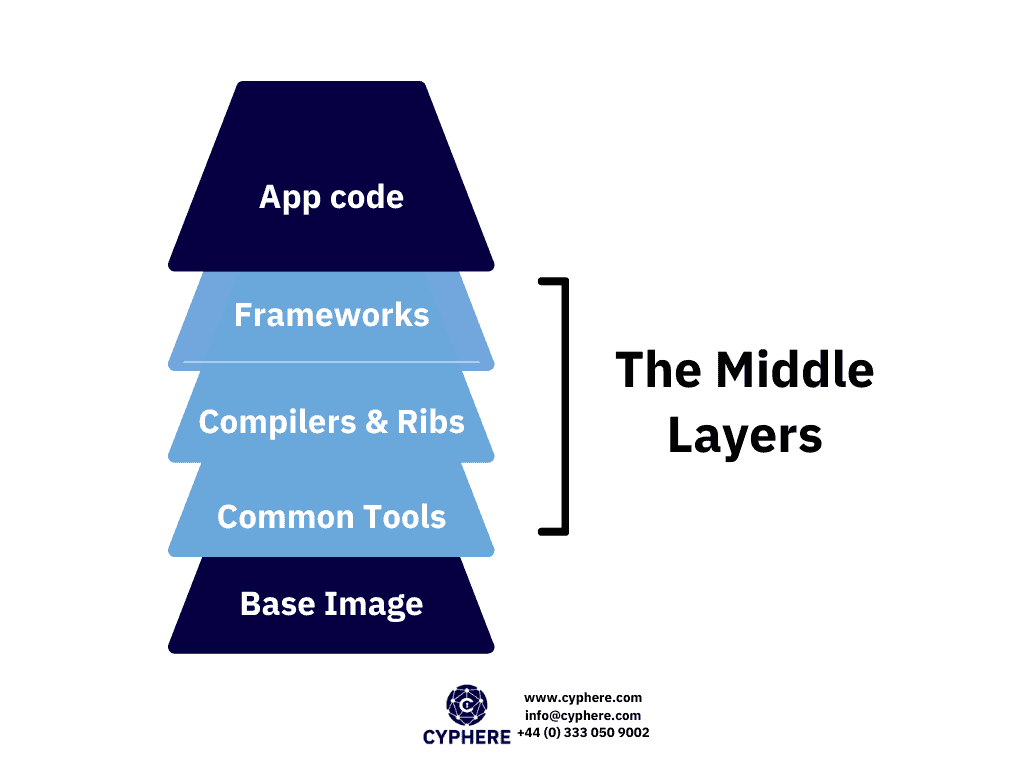

3. Manage the layers in between the base image and the code

When deploying a Docker image, users inherit out of the box whatever the base image provides and then build on top of it according to their requirements. In such scenarios a slim image, although secure, might become a burden, as the user has to add many layers to it to achieve the desired result.

If a user starts with a slim image, there is a good chance that the user will need to add tools, libraries, code and various other things. All of these can introduce vulnerabilities.

The good news here is that the user controls all of these middle layers, i.e. everything from the first FROM line all the way to the final Dockerfile lines. The RUN, COPY and ADD commands are of the most interest here.

Different tools may be required at each stage, but by the time an image reaches production all unnecessary items should be removed.
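To review the middle layers, it helps to pull the RUN, COPY and ADD instructions out of a Dockerfile. The following is a rough sketch with hypothetical function names; it joins backslash-continued lines but does not try to cover every Dockerfile syntax feature (heredocs and parser directives, for example).

```python
REVIEWED_INSTRUCTIONS = ("RUN", "COPY", "ADD")


def logical_lines(dockerfile_text: str) -> list:
    """Join lines that end in a backslash; drop comments and blank lines."""
    joined, buffer = [], ""
    for raw_line in dockerfile_text.splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#"):
            continue
        if line.endswith("\\"):
            # Keep accumulating until the instruction is complete.
            buffer += line[:-1].rstrip() + " "
            continue
        joined.append(buffer + line)
        buffer = ""
    if buffer:
        joined.append(buffer.strip())
    return joined


def middle_layers(dockerfile_text: str) -> list:
    """Return (instruction, arguments) for every RUN, COPY and ADD line."""
    layers = []
    for line in logical_lines(dockerfile_text):
        parts = line.split(None, 1)
        instruction = parts[0].upper()
        arguments = parts[1].strip() if len(parts) > 1 else ""
        if instruction in REVIEWED_INSTRUCTIONS:
            layers.append((instruction, arguments))
    return layers
```

The resulting list gives a reviewer one entry per layer that adds something to the image, which makes it easier to spot tools that should not reach production.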

Patching vulnerabilities in containers

Even with all the security controls in place, security vulnerabilities might still exist within containers, and it is crucial for users to know how to respond to them.

Below is a potential starting point for how to respond in such circumstances during the development, testing and production phases.

Development images

Out of all the images, these will likely have the most vulnerabilities in the middle layers as a lot of tools and support packages would be required during development.

The good news here is that if these additional tools, dependencies and other extra elements are not being included in the production version of the images then it may be safe to ignore many of the vulnerabilities at this stage.

Users should be able to track the installed dependencies so that they can make an informed decision about whether removing a dependency would crash the application. For example, a vulnerable library might be installed as a dependency of a dependency without ever being used. The developers must decide whether removing it would clear the vulnerability without affecting the application.

Test images

Test images differ little from development images when it comes to considering and mitigating vulnerabilities.

If the user knows a vulnerability exists in the test package that will not be transferred to the production image then the vulnerability may be ignored.

At this stage, it is a good idea to compare the vulnerabilities ignored in the development stage with those that still exist in the test image. Note that the vulnerabilities you ignored during the development phase should not be part of the test image.

If these vulnerabilities still exist, you might need to revisit the development stage and adjust the image accordingly.
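That comparison amounts to a set intersection. A small sketch, assuming the scanner output has already been reduced to sets of vulnerability identifiers (the function name and the identifiers in the usage note are placeholders, not real advisories):

```python
def carried_over(ignored_in_dev: set, found_in_test: set) -> list:
    """Return vulnerabilities ignored in development that reappear in test.

    A non-empty result means a development-only tool or dependency has
    leaked into the test image and the image needs to be revisited.
    """
    return sorted(ignored_in_dev & found_in_test)
```

For example, `carried_over({"VULN-A", "VULN-B"}, {"VULN-B", "VULN-C"})` returns `["VULN-B"]`, so the package behind `VULN-B` would need to be removed before the image moves on.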

Production images

These are the most critical images, and protecting them is crucial since they will be deployed live and used by actual customers or users. Getting down to zero vulnerabilities, even after removing all the vulnerable dependencies, would still be a tedious challenge.

The goal here is to focus on high-risk vulnerabilities, especially those with known exploits. If vulnerabilities have been addressed effectively in the development and testing phases, chances are the risks will be substantially reduced.
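The prioritisation described above can be sketched as a filter and a sort. The `Finding` structure, the severity labels and the choice to treat "critical" and "high" as high-risk are assumptions made for illustration; real scanners report findings in their own formats.

```python
from dataclasses import dataclass

# Assumed severity labels, ordered from most to least severe.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}
HIGH_RISK = {"critical", "high"}


@dataclass(frozen=True)
class Finding:
    identifier: str      # placeholder ID, e.g. "VULN-1"
    severity: str        # one of the SEVERITY_RANK keys
    known_exploit: bool  # True if an exploit is known to exist


def high_risk_first(findings: list) -> list:
    """Keep critical and high findings; known exploits first, then by severity."""
    selected = [f for f in findings if f.severity.lower() in HIGH_RISK]
    return sorted(
        selected,
        key=lambda f: (not f.known_exploit, SEVERITY_RANK[f.severity.lower()]),
    )
```

Medium and low findings are dropped from this view rather than deleted from the report, so they can still be handled once the high-risk items are closed.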

Best practices to make secure images

Now let’s look at a few of the security best practices you can follow to make your container images more secure:

- Use minimal base images. The larger the image, the greater the chance that it contains a vulnerability.

- In a Dockerfile, if USER is not specified then the container runs as the root user. Always make sure that the root user is not used when running containers.

- Always verify the origin from where the container images are pulled and only use trusted sources.

- It is crucial to scan and audit the images periodically to ensure there are no vulnerabilities.

- Linter rules can be defined to avoid common mistakes and implement guidelines that developers can follow.

- Implement robust access control.

- Monitor the health of the images once they are deployed so that any anomaly can be observed and addressed.

- All confidential data should be handled with care so that there is no disclosure of sensitive information.

- All container images, host and relevant technology should be kept up to date.

- Do not expose the Docker daemon socket for remote access.

- Limit the CPU and memory available to each container so that, even if the container is compromised, an attacker cannot exhaust the host's resources.

- Networks for containers should be segregated so that an attacker cannot hop from one container to another.

- All unnecessary packages, software, and libraries should be removed from the image.

- The setuid and setgid permissions should be removed from files in the image.

- Keep packages up to date. The packages added to the image should be well managed, and their security advisories must be checked for any known vulnerabilities.
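As an example of a linter rule for the root-user practice above, the sketch below flags a Dockerfile whose final build stage never switches away from root. The function names are hypothetical, and the check assumes the base image itself does not set a non-root USER, which this simple parser cannot see.

```python
from typing import Optional


def final_user(dockerfile_text: str) -> Optional[str]:
    """Return the value of the last USER instruction in the final build stage."""
    user = None
    for raw_line in dockerfile_text.splitlines():
        parts = raw_line.strip().split()
        if not parts:
            continue
        keyword = parts[0].upper()
        if keyword == "FROM":
            # A new stage starts from its base image; earlier USER lines no longer apply.
            user = None
        elif keyword == "USER" and len(parts) > 1:
            user = parts[1]
    return user


def runs_as_root(dockerfile_text: str) -> bool:
    """True if the final stage has no USER instruction or sets it to root."""
    user = final_user(dockerfile_text)
    if user is None:
        # Assumption: with no USER instruction the container runs as root.
        return True
    name = user.split(":", 1)[0]  # USER may be written as user:group
    return name in ("root", "0")
```

A pipeline step could call `runs_as_root` on each Dockerfile and reject the change when it returns `True`.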

Conclusion

Containers offer a great deal of ease and flexibility in today’s fast-moving environment. They help developers create hassle-free environments and make their work more efficient.

Container security is a vast topic and we have only scratched the surface. However, the key areas to consider when securing images include:

- Start with base images that are pulled from verified trusted sources. Digital signatures can be used to verify the identity.

- Wherever possible, opt for minimal base images with only basic components and build your requirements on top of them.

- Check for vulnerabilities at each stage: development, test and production. Vulnerability scanning should be done periodically and also whenever any major changes are made.

- Your organisation should invest in a robust solution for monitoring and scanning containers to mitigate vulnerabilities, and should employ personnel, especially in the DevOps teams, who can ensure that security is dealt with at each stage.

Shahrukh is a passionate cyber security analyst and researcher who loves to write technical blogs on different cyber security topics. He holds a Master's degree in Information Security and an OSCP, and has a strong technical skillset in offensive security.